Qkseo Forum

You are not logged in.

- Topics: Active | Unanswered

Pages: 1

#1 Test forum » DeepSeek: the Chinese aI App that has the World Talking » 2025-02-01 12:12:14

- Darrin54C

- Replies: 0

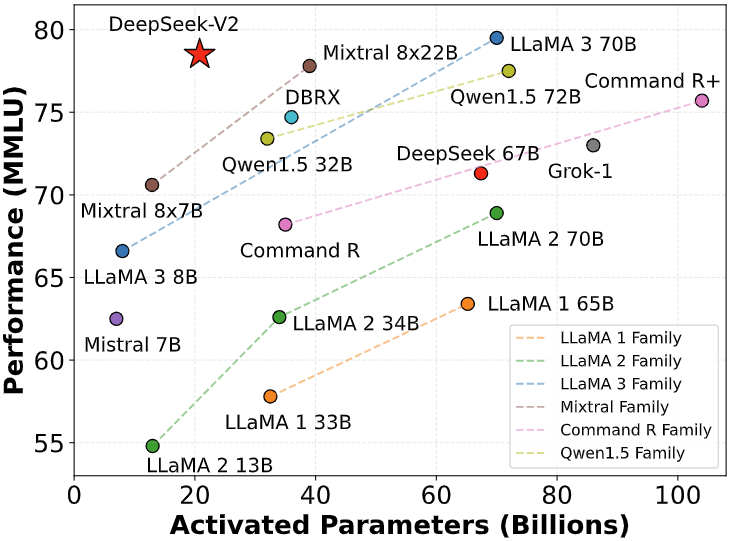

A Chinese-made artificial intelligence (AI) design called DeepSeek has shot to the top of Apple Store's downloads, sensational investors and sinking some tech stocks.

Its newest version was released on 20 January, quickly impressing AI specialists before it got the attention of the entire tech industry - and the world.

US President Donald Trump stated it was a "wake-up call" for US companies who must concentrate on "completing to win".

What makes DeepSeek so special is the business's claim that it was developed at a portion of the cost of industry-leading models like OpenAI - because it uses fewer sophisticated chips.

That possibility triggered chip-making giant Nvidia to shed nearly $600bn (₤ 482bn) of its market worth on Monday - the biggest one-day loss in US history.

DeepSeek also raises concerns about Washington's efforts to contain Beijing's push for tech supremacy, given that one of its essential constraints has actually been a ban on the export of innovative chips to China.

Beijing, however, has actually doubled down, with President Xi Jinping declaring AI a leading concern. And start-ups like DeepSeek are important as China rotates from traditional manufacturing such as clothes and furnishings to innovative tech - chips, electric vehicles and AI.

So what do we understand about DeepSeek?

Beware with DeepSeek, Australia says - so is it safe to utilize?

DeepSeek vs ChatGPT - how do they compare?

China's DeepSeek AI shakes market and dents America's swagger

What is synthetic intelligence?

AI can, at times, make a computer system look like an individual.

A machine uses the innovation to learn and resolve issues, usually by being trained on huge quantities of information and identifying patterns.

Completion outcome is software that can have discussions like an individual or predict individuals's shopping habits.

In the last few years, it has ended up being best understood as the tech behind chatbots such as ChatGPT - and DeepSeek - also called generative AI.

These programs again gain from big swathes of data, consisting of online text and images, to be able to make new material.

But these tools can create fallacies and frequently duplicate the predispositions consisted of within their training data.

Millions of individuals use tools such as ChatGPT to assist them with everyday jobs like composing emails, summing up text, and addressing concerns - and others even utilize them to assist with basic coding and studying.

DeepSeek is the name of a free AI-powered chatbot, which looks, feels and works very much like ChatGPT.

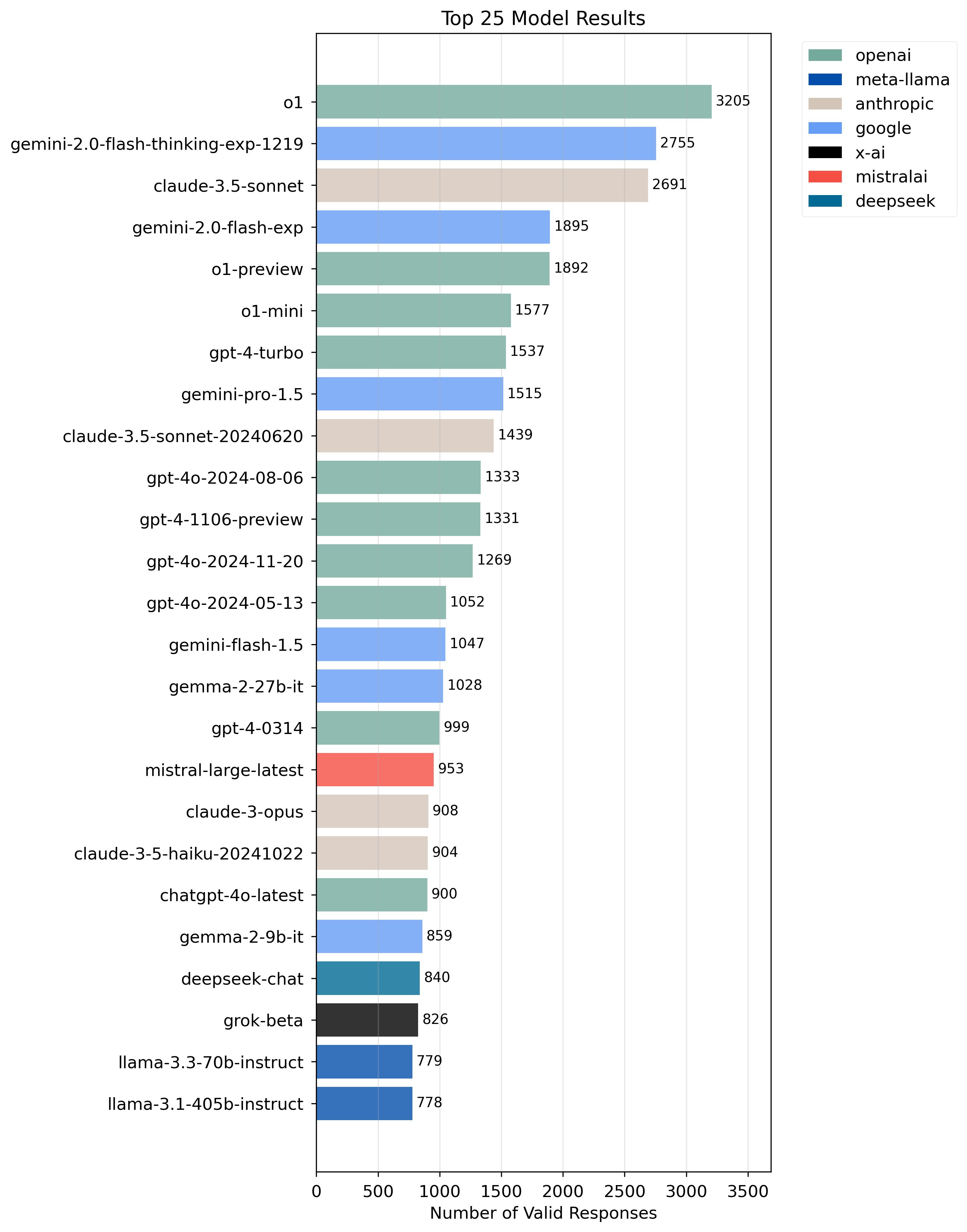

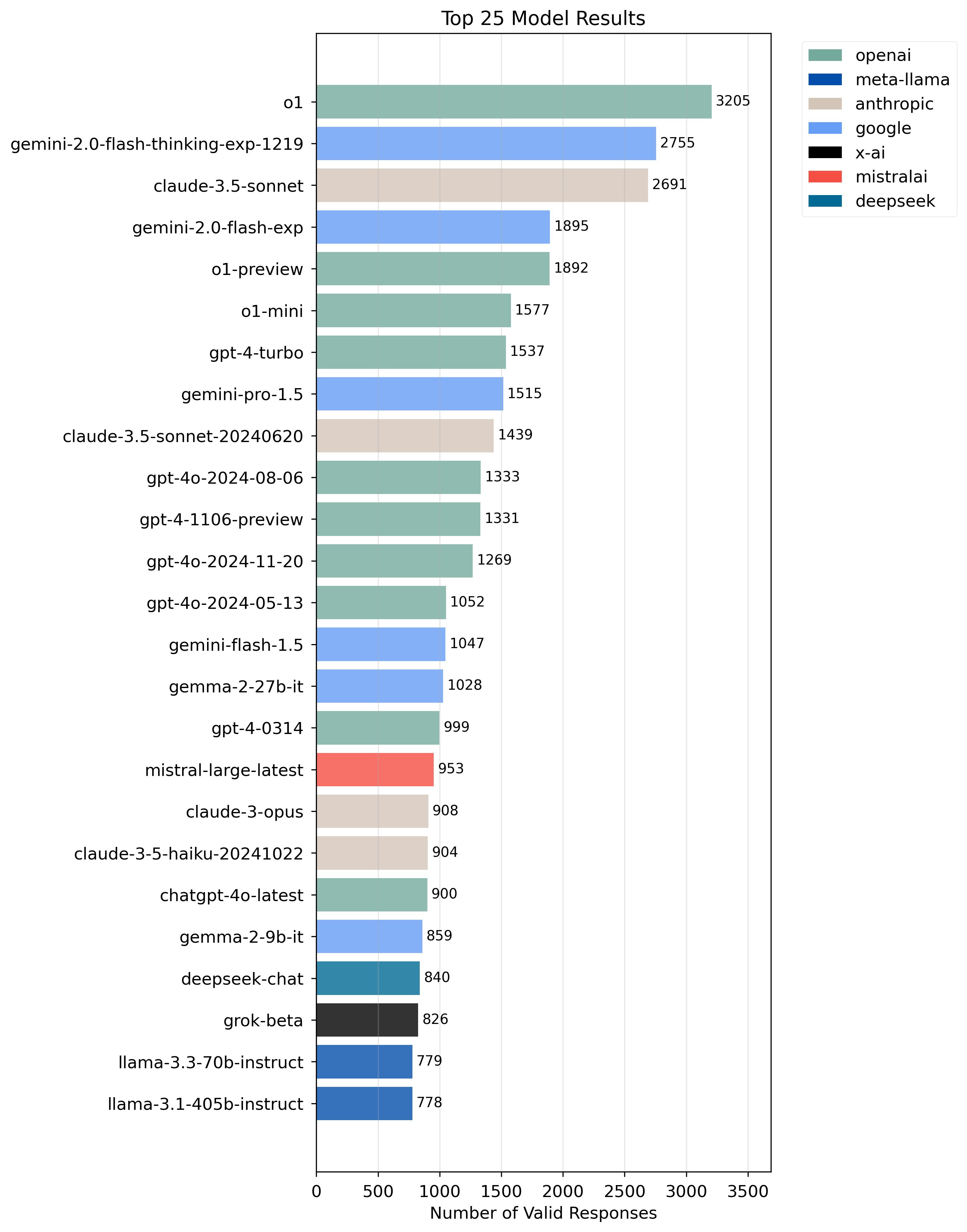

That implies it's utilized for a lot of the very same tasks, though precisely how well it works compared to its competitors is up for debate.

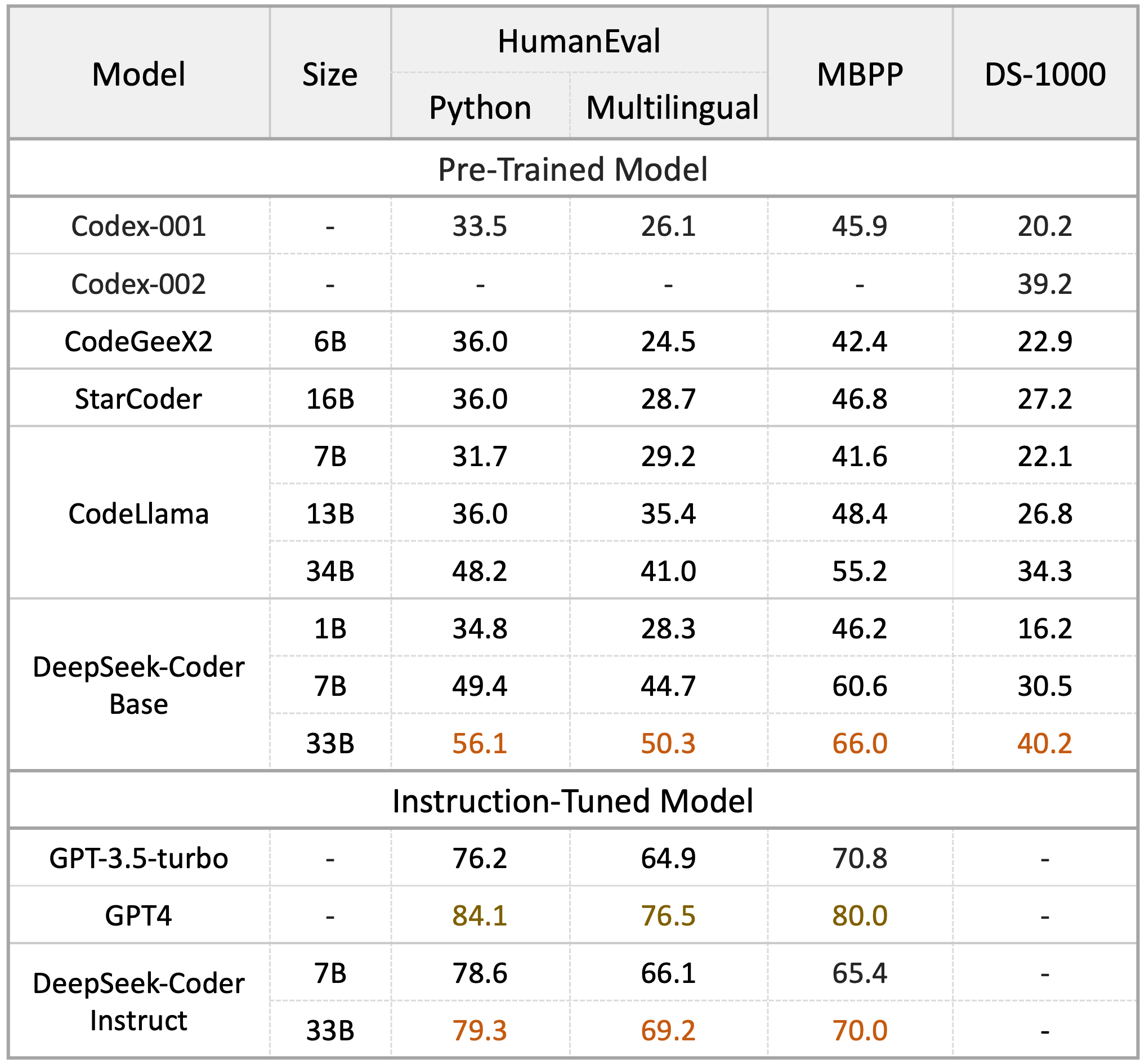

It is reportedly as effective as OpenAI's o1 design - released at the end of in 2015 - in jobs including mathematics and coding.

Like o1, R1 is a "reasoning" model. These models produce responses incrementally, mimicing a process similar to how people factor through issues or concepts. It utilizes less memory than its competitors, eventually lowering the cost to carry out tasks.

Like lots of other Chinese AI designs - Baidu's Ernie or Doubao by ByteDance - DeepSeek is trained to avoid politically delicate concerns.

When the BBC asked the app what happened at Tiananmen Square on 4 June 1989, DeepSeek did not offer any details about the massacre, a taboo subject in China.

It replied: "I am sorry, I can not respond to that concern. I am an AI assistant created to provide practical and harmless reactions."

Chinese government censorship is a substantial difficulty for its AI goals internationally. But DeepSeek's base design appears to have actually been trained via precise sources while introducing a layer of censorship or withholding specific information via an additional safeguarding layer.

Deepseek says it has actually been able to do this cheaply - researchers behind it declare it cost $6m (₤ 4.8 m) to train, a portion of the "over $100m" mentioned by OpenAI manager Sam Altman when talking about GPT-4.

DeepSeek's creator supposedly developed a shop of Nvidia A100 chips, which have been prohibited from export to China since September 2022.

Some specialists believe this collection - which some quotes put at 50,000 - led him to construct such an effective AI model, by combining these chips with more affordable, less advanced ones.

The exact same day DeepSeek's AI assistant ended up being the most-downloaded free app on Apple's App Store in the US, it was struck with "massive destructive attacks", the business said, triggering the business to short-lived limit registrations.

It was also struck by failures on its site on Monday.

Who is behind DeepSeek?

DeepSeek was founded in December 2023 by Liang Wenfeng, and launched its first AI big language model the following year.

Very little is learnt about Liang, who graduated from Zhejiang University with degrees in electronic info engineering and computer technology. But he now discovers himself in the global spotlight.

He was recently seen at a meeting hosted by China's premier Li Qiang, showing DeepSeek's growing prominence in the AI market.(1).pngL.jpg)

Unlike numerous American AI entrepreneurs who are from Silicon Valley, Mr Liang likewise has a background in finance.

He is the CEO of a hedge fund called High-Flyer, which utilizes AI to evaluate monetary information to make investment decisons - what is called quantitative trading. In 2019 High-Flyer became the very first quant hedge fund in China to raise over 100 billion yuan ($13m).

#2 Test forum » Symbolic Artificial Intelligence » 2025-02-01 11:46:58

- Darrin54C

- Replies: 0

In artificial intelligence, symbolic expert system (also referred to as classical synthetic intelligence or logic-based expert system) [1] [2] is the term for the collection of all methods in artificial intelligence research study that are based upon top-level symbolic (human-readable) representations of problems, logic and search. [3] Symbolic AI utilized tools such as reasoning programming, production rules, semantic webs and frames, and it developed applications such as knowledge-based systems (in particular, skilled systems), symbolic mathematics, automated theorem provers, ontologies, the semantic web, and automated preparation and scheduling systems. The Symbolic AI paradigm resulted in influential concepts in search, symbolic programs languages, agents, multi-agent systems, the semantic web, and the strengths and limitations of official understanding and thinking systems.

Symbolic AI was the dominant paradigm of AI research from the mid-1950s up until the mid-1990s. [4] Researchers in the 1960s and the 1970s were encouraged that symbolic approaches would eventually be successful in developing a maker with artificial general intelligence and considered this the supreme objective of their field. [citation needed] An early boom, with early successes such as the Logic Theorist and Samuel's Checkers Playing Program, caused unrealistic expectations and pledges and was followed by the first AI Winter as moneying dried up. [5] [6] A 2nd boom (1969-1986) took place with the increase of specialist systems, their guarantee of catching business know-how, and a passionate corporate welcome. [7] [8] That boom, and some early successes, e.g., with XCON at DEC, was followed once again by later on dissatisfaction. [8] Problems with problems in understanding acquisition, maintaining large understanding bases, and brittleness in managing out-of-domain problems emerged. Another, second, AI Winter (1988-2011) followed. [9] Subsequently, AI researchers concentrated on attending to underlying problems in handling unpredictability and in understanding acquisition. [10] Uncertainty was attended to with formal methods such as hidden Markov designs, Bayesian reasoning, and analytical relational learning. [11] [12] Symbolic maker discovering resolved the knowledge acquisition issue with contributions consisting of Version Space, Valiant's PAC knowing, Quinlan's ID3 decision-tree knowing, case-based learning, and inductive logic programming to find out relations. [13]

Neural networks, a subsymbolic method, had been pursued from early days and reemerged strongly in 2012. Early examples are Rosenblatt's perceptron learning work, the backpropagation work of Rumelhart, Hinton and Williams, [14] and operate in convolutional neural networks by LeCun et al. in 1989. [15] However, neural networks were not considered as successful until about 2012: "Until Big Data became prevalent, the basic agreement in the Al community was that the so-called neural-network technique was hopeless. Systems just didn't work that well, compared to other techniques. ... A revolution can be found in 2012, when a variety of people, including a group of scientists working with Hinton, worked out a method to utilize the power of GPUs to enormously increase the power of neural networks." [16] Over the next a number of years, deep knowing had magnificent success in managing vision, speech recognition, speech synthesis, image generation, and machine translation. However, because 2020, as intrinsic troubles with bias, description, coherence, and toughness ended up being more evident with deep learning methods; an increasing number of AI researchers have actually required integrating the very best of both the symbolic and neural network methods [17] [18] and resolving locations that both methods have problem with, such as sensible thinking. [16]

A brief history of symbolic AI to today day follows below. Time durations and titles are drawn from Henry Kautz's 2020 AAAI Robert S. Engelmore Memorial Lecture [19] and the longer Wikipedia article on the History of AI, with dates and titles differing a little for increased clarity.

The first AI summertime: irrational spirit, 1948-1966

Success at early efforts in AI happened in three main locations: artificial neural networks, understanding representation, and heuristic search, contributing to high expectations. This area summarizes Kautz's reprise of early AI history.

Approaches influenced by human or animal cognition or behavior

Cybernetic approaches tried to duplicate the feedback loops in between animals and their environments. A robotic turtle, with sensors, motors for driving and guiding, and seven vacuum tubes for control, based on a preprogrammed neural web, was constructed as early as 1948. This work can be viewed as an early precursor to later work in neural networks, reinforcement learning, and positioned robotics. [20]

A crucial early symbolic AI program was the Logic theorist, written by Allen Newell, Herbert Simon and Cliff Shaw in 1955-56, as it had the ability to show 38 primary theorems from Whitehead and Russell's Principia Mathematica. Newell, Simon, and Shaw later on generalized this work to develop a domain-independent problem solver, GPS (General Problem Solver). GPS solved issues represented with formal operators through state-space search utilizing means-ends analysis. [21]

During the 1960s, symbolic approaches achieved terrific success at imitating smart habits in structured environments such as game-playing, symbolic mathematics, and theorem-proving. AI research study was concentrated in 4 organizations in the 1960s: Carnegie Mellon University, Stanford, MIT and (later on) University of Edinburgh. Every one established its own design of research. Earlier approaches based on cybernetics or artificial neural networks were deserted or pushed into the background.

Herbert Simon and Allen Newell studied human analytical skills and tried to formalize them, and their work laid the foundations of the field of expert system, along with cognitive science, operations research study and management science. Their research study group utilized the results of mental experiments to establish programs that simulated the methods that people used to resolve problems. [22] [23] This tradition, centered at Carnegie Mellon University would ultimately culminate in the development of the Soar architecture in the middle 1980s. [24] [25]

Heuristic search

In addition to the extremely specialized domain-specific kinds of understanding that we will see later on utilized in professional systems, early symbolic AI researchers discovered another more general application of understanding. These were called heuristics, guidelines that direct a search in appealing instructions: "How can non-enumerative search be practical when the underlying problem is tremendously hard? The approach promoted by Simon and Newell is to employ heuristics: quick algorithms that might stop working on some inputs or output suboptimal options." [26] Another important advance was to discover a way to apply these heuristics that guarantees an option will be discovered, if there is one, not holding up against the periodic fallibility of heuristics: "The A * algorithm offered a general frame for complete and ideal heuristically directed search. A * is utilized as a subroutine within virtually every AI algorithm today but is still no magic bullet; its guarantee of efficiency is purchased the cost of worst-case rapid time. [26]

Early deal with understanding representation and thinking

Early work covered both applications of official thinking stressing first-order reasoning, together with attempts to manage common-sense thinking in a less formal way.

Modeling formal reasoning with logic: the "neats"

Unlike Simon and Newell, John McCarthy felt that devices did not need to replicate the precise systems of human idea, but might rather search for the essence of abstract thinking and analytical with reasoning, [27] no matter whether individuals used the exact same algorithms. [a] His lab at Stanford (SAIL) concentrated on using formal logic to resolve a wide array of problems, including understanding representation, planning and learning. [31] Logic was likewise the focus of the work at the University of Edinburgh and somewhere else in Europe which led to the advancement of the programs language Prolog and the science of logic programming. [32] [33]

Modeling implicit sensible knowledge with frames and scripts: the "scruffies"

Researchers at MIT (such as Marvin Minsky and Seymour Papert) [34] [35] [6] found that resolving tough problems in vision and natural language processing required advertisement hoc solutions-they argued that no basic and basic concept (like reasoning) would record all the elements of smart habits. Roger Schank explained their "anti-logic" techniques as "scruffy" (instead of the "cool" paradigms at CMU and Stanford). [36] [37] Commonsense understanding bases (such as Doug Lenat's Cyc) are an example of "shabby" AI, because they should be built by hand, one complicated principle at a time. [38] [39] [40]

The first AI winter season: crushed dreams, 1967-1977

The very first AI winter was a shock:

During the first AI summer, lots of people believed that device intelligence might be attained in simply a couple of years. The Defense Advance Research Projects Agency (DARPA) launched programs to support AI research study to use AI to fix issues of nationwide security; in particular, to automate the translation of Russian to English for intelligence operations and to develop autonomous tanks for the battleground. Researchers had actually started to understand that achieving AI was going to be much harder than was supposed a years earlier, but a combination of hubris and disingenuousness led lots of university and think-tank scientists to accept financing with promises of deliverables that they need to have understood they might not meet. By the mid-1960s neither helpful natural language translation systems nor autonomous tanks had actually been produced, and a dramatic backlash embeded in. New DARPA leadership canceled existing AI funding programs.

Beyond the United States, the most fertile ground for AI research study was the UK. The AI winter season in the UK was spurred on not so much by disappointed military leaders as by competing academics who saw AI researchers as charlatans and a drain on research study funding. A teacher of applied mathematics, Sir James Lighthill, was commissioned by Parliament to examine the state of AI research in the country. The report specified that all of the problems being worked on in AI would be better dealt with by researchers from other disciplines-such as applied mathematics. The report also claimed that AI successes on toy problems could never ever scale to real-world applications due to combinatorial explosion. [41]

The second AI summertime: knowledge is power, 1978-1987

Knowledge-based systems

As constraints with weak, domain-independent approaches ended up being increasingly more obvious, [42] scientists from all three traditions started to develop knowledge into AI applications. [43] [7] The understanding revolution was driven by the awareness that understanding underlies high-performance, domain-specific AI applications.

Edward Feigenbaum said:

- "In the knowledge lies the power." [44]

to explain that high efficiency in a particular domain needs both general and extremely domain-specific knowledge. Ed Feigenbaum and Doug Lenat called this The Knowledge Principle:

( 1) The Knowledge Principle: if a program is to perform a complicated job well, it should know a good deal about the world in which it operates.

( 2) A plausible extension of that concept, called the Breadth Hypothesis: there are 2 extra abilities required for intelligent behavior in unanticipated situations: falling back on progressively general understanding, and analogizing to particular however remote understanding. [45]

Success with professional systems

This "understanding revolution" led to the advancement and implementation of professional systems (introduced by Edward Feigenbaum), the very first commercially successful type of AI software application. [46] [47] [48]

Key specialist systems were:

DENDRAL, which found the structure of natural particles from their chemical formula and mass spectrometer readings.

MYCIN, which detected bacteremia - and suggested additional laboratory tests, when required - by analyzing laboratory results, patient history, and medical professional observations. "With about 450 rules, MYCIN was able to carry out along with some experts, and considerably much better than junior doctors." [49] INTERNIST and CADUCEUS which tackled internal medication medical diagnosis. Internist attempted to catch the know-how of the chairman of internal medication at the University of Pittsburgh School of Medicine while CADUCEUS might ultimately identify approximately 1000 various diseases.

- GUIDON, which demonstrated how an understanding base developed for expert problem resolving could be repurposed for teaching. [50] XCON, to configure VAX computers, a then laborious procedure that could use up to 90 days. XCON minimized the time to about 90 minutes. [9]

DENDRAL is thought about the very first professional system that relied on knowledge-intensive analytical. It is explained listed below, by Ed Feigenbaum, from a Communications of the ACM interview, Interview with Ed Feigenbaum:

Among the people at Stanford interested in computer-based models of mind was Joshua Lederberg, the 1958 Nobel Prize winner in genetics. When I told him I desired an induction "sandbox", he stated, "I have simply the one for you." His laboratory was doing mass spectrometry of amino acids. The question was: how do you go from taking a look at the spectrum of an amino acid to the chemical structure of the amino acid? That's how we started the DENDRAL Project: I was proficient at heuristic search methods, and he had an algorithm that was proficient at producing the chemical issue area.

We did not have a grandiose vision. We worked bottom up. Our chemist was Carl Djerassi, innovator of the chemical behind the birth control tablet, and also one of the world's most appreciated mass spectrometrists. Carl and his postdocs were first-rate specialists in mass spectrometry. We started to contribute to their knowledge, developing knowledge of engineering as we went along. These experiments totaled up to titrating DENDRAL increasingly more knowledge. The more you did that, the smarter the program ended up being. We had really great results.

The generalization was: in the understanding lies the power. That was the big idea. In my profession that is the huge, "Ah ha!," and it wasn't the method AI was being done previously. Sounds simple, however it's probably AI's most powerful generalization. [51]

The other professional systems discussed above came after DENDRAL. MYCIN exemplifies the timeless expert system architecture of a knowledge-base of guidelines combined to a symbolic thinking system, consisting of making use of certainty aspects to deal with unpredictability. GUIDON reveals how a specific knowledge base can be repurposed for a second application, tutoring, and is an example of a smart tutoring system, a specific sort of knowledge-based application. Clancey showed that it was not sufficient merely to utilize MYCIN's rules for guideline, however that he also required to include rules for discussion management and trainee modeling. [50] XCON is considerable because of the millions of dollars it saved DEC, which activated the specialist system boom where most all significant corporations in the US had professional systems groups, to record business proficiency, preserve it, and automate it:

By 1988, DEC's AI group had 40 specialist systems deployed, with more on the way. DuPont had 100 in use and 500 in advancement. Nearly every major U.S. corporation had its own Al group and was either utilizing or examining professional systems. [49]

Chess professional knowledge was encoded in Deep Blue. In 1996, this enabled IBM's Deep Blue, with the aid of symbolic AI, to win in a video game of chess against the world champ at that time, Garry Kasparov. [52]

Architecture of knowledge-based and expert systems

A crucial part of the system architecture for all specialist systems is the understanding base, which stores realities and rules for analytical. [53] The simplest method for a professional system knowledge base is just a collection or network of production rules. Production guidelines connect signs in a relationship similar to an If-Then statement. The expert system processes the guidelines to make deductions and to determine what additional details it requires, i.e. what concerns to ask, utilizing human-readable signs. For example, OPS5, CLIPS and their successors Jess and Drools operate in this style.

Expert systems can run in either a forward chaining - from evidence to conclusions - or backward chaining - from goals to required information and prerequisites - way. Advanced knowledge-based systems, such as Soar can also carry out meta-level thinking, that is reasoning about their own thinking in terms of deciding how to resolve problems and monitoring the success of problem-solving strategies.

Blackboard systems are a second kind of knowledge-based or skilled system architecture. They design a neighborhood of professionals incrementally contributing, where they can, to resolve a problem. The problem is represented in several levels of abstraction or alternate views. The professionals (understanding sources) offer their services whenever they acknowledge they can contribute. Potential problem-solving actions are represented on a program that is upgraded as the problem circumstance modifications. A controller decides how beneficial each contribution is, and who need to make the next analytical action. One example, the BB1 chalkboard architecture [54] was initially motivated by studies of how people plan to perform numerous tasks in a trip. [55] A development of BB1 was to use the exact same blackboard design to resolving its control issue, i.e., its controller carried out meta-level thinking with knowledge sources that kept track of how well a plan or the analytical was proceeding and might change from one strategy to another as conditions - such as objectives or times - altered. BB1 has been used in several domains: construction website preparation, intelligent tutoring systems, and real-time patient monitoring.

The second AI winter season, 1988-1993

At the height of the AI boom, companies such as Symbolics, LMI, and Texas Instruments were selling LISP machines particularly targeted to accelerate the development of AI applications and research. In addition, a number of synthetic intelligence business, such as Teknowledge and Inference Corporation, were offering professional system shells, training, and consulting to corporations.

Unfortunately, the AI boom did not last and Kautz finest explains the second AI winter season that followed:

Many factors can be used for the arrival of the second AI winter season. The hardware business failed when much more cost-efficient general Unix workstations from Sun together with excellent compilers for LISP and Prolog came onto the marketplace. Many business deployments of specialist systems were discontinued when they proved too pricey to preserve. Medical expert systems never caught on for several reasons: the trouble in keeping them approximately date; the challenge for medical specialists to discover how to utilize an overwelming variety of different specialist systems for different medical conditions; and maybe most crucially, the reluctance of doctors to rely on a computer-made diagnosis over their gut impulse, even for particular domains where the specialist systems might surpass an average physician. Equity capital cash deserted AI virtually overnight. The world AI conference IJCAI hosted an enormous and extravagant trade convention and thousands of nonacademic participants in 1987 in Vancouver; the main AI conference the following year, AAAI 1988 in St. Paul, was a little and strictly scholastic affair. [9]

Including more extensive foundations, 1993-2011

Uncertain thinking

Both analytical techniques and extensions to logic were tried.

One analytical approach, concealed Markov models, had actually already been popularized in the 1980s for speech recognition work. [11] Subsequently, in 1988, Judea Pearl popularized using Bayesian Networks as a sound but efficient method of handling uncertain reasoning with his publication of the book Probabilistic Reasoning in Intelligent Systems: Networks of Plausible Inference. [56] and Bayesian techniques were applied effectively in specialist systems. [57] Even later on, in the 1990s, statistical relational knowing, a technique that combines probability with sensible solutions, enabled probability to be combined with first-order reasoning, e.g., with either Markov Logic Networks or Probabilistic Soft Logic.

Other, non-probabilistic extensions to first-order logic to assistance were likewise attempted. For example, non-monotonic reasoning might be utilized with truth upkeep systems. A reality upkeep system tracked presumptions and reasons for all inferences. It enabled inferences to be withdrawn when presumptions were discovered to be inaccurate or a contradiction was obtained. Explanations might be attended to an inference by discussing which guidelines were used to create it and then continuing through underlying reasonings and rules all the way back to root presumptions. [58] Lofti Zadeh had presented a different type of extension to handle the representation of ambiguity. For instance, in choosing how "heavy" or "tall" a male is, there is frequently no clear "yes" or "no" answer, and a predicate for heavy or tall would instead return worths in between 0 and 1. Those worths represented to what degree the predicates held true. His fuzzy logic further offered a means for propagating mixes of these values through logical formulas. [59]

Artificial intelligence

Symbolic device learning approaches were examined to attend to the understanding acquisition traffic jam. Among the earliest is Meta-DENDRAL. Meta-DENDRAL utilized a generate-and-test strategy to produce plausible guideline hypotheses to evaluate against spectra. Domain and job understanding reduced the number of prospects tested to a manageable size. Feigenbaum explained Meta-DENDRAL as

... the culmination of my imagine the early to mid-1960s relating to theory formation. The conception was that you had an issue solver like DENDRAL that took some inputs and produced an output. In doing so, it used layers of understanding to steer and prune the search. That knowledge acted since we spoke with individuals. But how did individuals get the understanding? By looking at thousands of spectra. So we desired a program that would look at countless spectra and presume the understanding of mass spectrometry that DENDRAL might use to solve specific hypothesis formation issues. We did it. We were even able to publish new knowledge of mass spectrometry in the Journal of the American Chemical Society, providing credit only in a footnote that a program, Meta-DENDRAL, in fact did it. We had the ability to do something that had actually been a dream: to have a computer program created a brand-new and publishable piece of science. [51]

In contrast to the knowledge-intensive method of Meta-DENDRAL, Ross Quinlan developed a domain-independent technique to analytical classification, choice tree learning, beginning first with ID3 [60] and after that later on extending its abilities to C4.5. [61] The decision trees produced are glass box, interpretable classifiers, with human-interpretable category rules.

Advances were made in comprehending artificial intelligence theory, too. Tom Mitchell presented version space knowing which explains knowing as an explore a space of hypotheses, with upper, more basic, and lower, more particular, limits including all viable hypotheses constant with the examples seen so far. [62] More formally, Valiant presented Probably Approximately Correct Learning (PAC Learning), a structure for the mathematical analysis of artificial intelligence. [63]

Symbolic device finding out encompassed more than finding out by example. E.g., John Anderson supplied a cognitive model of human learning where ability practice results in a compilation of rules from a declarative format to a procedural format with his ACT-R cognitive architecture. For example, a trainee might discover to use "Supplementary angles are two angles whose steps sum 180 degrees" as numerous various procedural rules. E.g., one rule may state that if X and Y are supplemental and you know X, then Y will be 180 - X. He called his approach "knowledge compilation". ACT-R has been used successfully to design aspects of human cognition, such as finding out and retention. ACT-R is likewise used in intelligent tutoring systems, called cognitive tutors, to effectively teach geometry, computer system programming, and algebra to school children. [64]

Inductive logic shows was another approach to discovering that enabled logic programs to be manufactured from input-output examples. E.g., Ehud Shapiro's MIS (Model Inference System) could manufacture Prolog programs from examples. [65] John R. Koza used genetic algorithms to program synthesis to develop genetic programs, which he used to synthesize LISP programs. Finally, Zohar Manna and Richard Waldinger provided a more general method to program synthesis that synthesizes a practical program in the course of proving its specs to be right. [66]

As an option to logic, Roger Schank presented case-based reasoning (CBR). The CBR approach outlined in his book, Dynamic Memory, [67] focuses first on remembering crucial problem-solving cases for future usage and generalizing them where proper. When confronted with a brand-new problem, CBR retrieves the most comparable previous case and adjusts it to the specifics of the current issue. [68] Another alternative to logic, genetic algorithms and hereditary shows are based upon an evolutionary design of knowing, where sets of guidelines are encoded into populations, the guidelines govern the habits of individuals, and selection of the fittest prunes out sets of inappropriate guidelines over lots of generations. [69]

Symbolic artificial intelligence was used to finding out ideas, guidelines, heuristics, and analytical. Approaches, other than those above, include:

1. Learning from instruction or advice-i.e., taking human instruction, postured as recommendations, and identifying how to operationalize it in particular circumstances. For example, in a video game of Hearts, finding out exactly how to play a hand to "prevent taking points." [70] 2. Learning from exemplars-improving efficiency by accepting subject-matter specialist (SME) feedback throughout training. When problem-solving stops working, querying the expert to either learn a brand-new prototype for problem-solving or to find out a new explanation as to exactly why one exemplar is more relevant than another. For instance, the program Protos discovered to identify tinnitus cases by communicating with an audiologist. [71] 3. Learning by analogy-constructing issue services based on comparable problems seen in the past, and then modifying their options to fit a brand-new circumstance or domain. [72] [73] 4. Apprentice knowing systems-learning unique solutions to problems by observing human problem-solving. Domain knowledge discusses why unique solutions are correct and how the solution can be generalized. LEAP learned how to create VLSI circuits by observing human designers. [74] 5. Learning by discovery-i.e., developing jobs to carry out experiments and after that gaining from the results. Doug Lenat's Eurisko, for example, discovered heuristics to beat human players at the Traveller role-playing game for 2 years in a row. [75] 6. Learning macro-operators-i.e., looking for helpful macro-operators to be learned from series of fundamental problem-solving actions. Good macro-operators streamline problem-solving by allowing problems to be resolved at a more abstract level. [76]

Deep learning and neuro-symbolic AI 2011-now

With the rise of deep knowing, the symbolic AI approach has been compared to deep learning as complementary "... with parallels having actually been drawn often times by AI researchers in between Kahneman's research on human thinking and decision making - shown in his book Thinking, Fast and Slow - and the so-called "AI systems 1 and 2", which would in concept be modelled by deep knowing and symbolic thinking, respectively." In this view, symbolic thinking is more apt for deliberative reasoning, preparation, and explanation while deep learning is more apt for quick pattern acknowledgment in affective applications with loud information. [17] [18]

Neuro-symbolic AI: integrating neural and symbolic methods

Neuro-symbolic AI efforts to integrate neural and symbolic architectures in a way that addresses strengths and weaknesses of each, in a complementary style, in order to support robust AI capable of thinking, discovering, and cognitive modeling. As argued by Valiant [77] and lots of others, [78] the reliable construction of abundant computational cognitive models requires the mix of sound symbolic thinking and efficient (maker) learning models. Gary Marcus, likewise, argues that: "We can not construct rich cognitive designs in a sufficient, automatic method without the triune of hybrid architecture, abundant anticipation, and advanced techniques for reasoning.", [79] and in particular: "To develop a robust, knowledge-driven method to AI we must have the machinery of symbol-manipulation in our toolkit. Excessive of useful understanding is abstract to make do without tools that represent and control abstraction, and to date, the only equipment that we understand of that can control such abstract understanding dependably is the apparatus of symbol manipulation. " [80]

Henry Kautz, [19] Francesca Rossi, [81] and Bart Selman [82] have likewise argued for a synthesis. Their arguments are based upon a requirement to deal with the two type of believing discussed in Daniel Kahneman's book, Thinking, Fast and Slow. Kahneman explains human thinking as having 2 components, System 1 and System 2. System 1 is quickly, automatic, instinctive and unconscious. System 2 is slower, step-by-step, and explicit. System 1 is the kind utilized for pattern recognition while System 2 is far better suited for preparation, deduction, and deliberative thinking. In this view, deep knowing finest designs the first sort of believing while symbolic reasoning best models the 2nd kind and both are required.

Garcez and Lamb explain research study in this area as being ongoing for at least the past twenty years, [83] dating from their 2002 book on neurosymbolic knowing systems. [84] A series of workshops on neuro-symbolic thinking has been held every year considering that 2005, see http://www.neural-symbolic.org/ for details.

In their 2015 paper, Neural-Symbolic Learning and Reasoning: Contributions and Challenges, Garcez et al. argue that:

The integration of the symbolic and connectionist paradigms of AI has actually been pursued by a fairly little research study neighborhood over the last 2 years and has yielded numerous considerable results. Over the last years, neural symbolic systems have been shown efficient in getting rid of the so-called propositional fixation of neural networks, as McCarthy (1988) put it in reaction to Smolensky (1988 ); see also (Hinton, 1990). Neural networks were shown capable of representing modal and temporal logics (d'Avila Garcez and Lamb, 2006) and pieces of first-order logic (Bader, Hitzler, Hölldobler, 2008; d'Avila Garcez, Lamb, Gabbay, 2009). Further, neural-symbolic systems have actually been applied to a number of issues in the locations of bioinformatics, control engineering, software confirmation and adjustment, visual intelligence, ontology knowing, and computer games. [78]

Approaches for integration are differed. Henry Kautz's taxonomy of neuro-symbolic architectures, along with some examples, follows:

- Symbolic Neural symbolic-is the current technique of many neural designs in natural language processing, where words or subword tokens are both the supreme input and output of big language models. Examples include BERT, RoBERTa, and GPT-3.

- Symbolic [Neural] -is exemplified by AlphaGo, where symbolic strategies are used to call neural methods. In this case the symbolic approach is Monte Carlo tree search and the neural techniques discover how to evaluate game positions.

- Neural|Symbolic-uses a neural architecture to analyze affective data as signs and relationships that are then reasoned about symbolically.

- Neural: Symbolic → Neural-relies on symbolic reasoning to produce or identify training information that is consequently discovered by a deep knowing design, e.g., to train a neural model for symbolic computation by utilizing a Macsyma-like symbolic mathematics system to develop or identify examples.

- Neural _ Symbolic -uses a neural web that is produced from symbolic guidelines. An example is the Neural Theorem Prover, [85] which constructs a neural network from an AND-OR proof tree created from understanding base rules and terms. Logic Tensor Networks [86] likewise fall into this classification.

- Neural [Symbolic] -allows a neural design to straight call a symbolic thinking engine, e.g., to perform an action or assess a state.

Many key research concerns remain, such as:

- What is the very best way to incorporate neural and symbolic architectures? [87]- How should symbolic structures be represented within neural networks and extracted from them?

- How should sensible knowledge be discovered and reasoned about?

- How can abstract understanding that is tough to encode rationally be handled?

Techniques and contributions

This area offers an introduction of methods and contributions in a total context leading to many other, more comprehensive posts in Wikipedia. Sections on Artificial Intelligence and Uncertain Reasoning are covered previously in the history section.

AI shows languages

The key AI shows language in the US during the last symbolic AI boom period was LISP. LISP is the second oldest programming language after FORTRAN and was created in 1958 by John McCarthy. LISP offered the very first read-eval-print loop to support fast program development. Compiled functions might be freely mixed with translated functions. Program tracing, stepping, and breakpoints were likewise supplied, together with the capability to change worths or functions and continue from breakpoints or errors. It had the very first self-hosting compiler, meaning that the compiler itself was originally written in LISP and after that ran interpretively to assemble the compiler code.

Other key innovations originated by LISP that have spread to other programming languages include:

Garbage collection

Dynamic typing

Higher-order functions

Recursion

Conditionals

Programs were themselves data structures that other programs could operate on, allowing the simple meaning of higher-level languages.

In contrast to the US, in Europe the key AI shows language throughout that very same period was Prolog. Prolog offered an integrated shop of facts and provisions that might be queried by a read-eval-print loop. The shop could act as an understanding base and the clauses could act as guidelines or a restricted form of reasoning. As a subset of first-order reasoning Prolog was based on Horn provisions with a closed-world assumption-any realities not known were considered false-and a distinct name presumption for primitive terms-e.g., the identifier barack_obama was considered to describe precisely one object. Backtracking and marriage are built-in to Prolog.

Alain Colmerauer and Philippe Roussel are credited as the inventors of Prolog. Prolog is a type of logic programming, which was developed by Robert Kowalski. Its history was likewise affected by Carl Hewitt's PLANNER, an assertional database with pattern-directed invocation of approaches. For more information see the section on the origins of Prolog in the PLANNER short article.

Prolog is likewise a sort of declarative shows. The reasoning provisions that describe programs are straight analyzed to run the programs defined. No specific series of actions is needed, as holds true with crucial programming languages.

Japan championed Prolog for its Fifth Generation Project, intending to develop unique hardware for high efficiency. Similarly, LISP makers were built to run LISP, however as the second AI boom turned to bust these business could not take on new workstations that might now run LISP or Prolog natively at equivalent speeds. See the history section for more information.

Smalltalk was another prominent AI shows language. For instance, it presented metaclasses and, along with Flavors and CommonLoops, affected the Common Lisp Object System, or (CLOS), that is now part of Common Lisp, the existing standard Lisp dialect. CLOS is a Lisp-based object-oriented system that enables numerous inheritance, in addition to incremental extensions to both classes and metaclasses, hence providing a run-time meta-object protocol. [88]

For other AI shows languages see this list of programming languages for synthetic intelligence. Currently, Python, a multi-paradigm programs language, is the most popular programs language, partially due to its extensive plan library that supports data science, natural language processing, and deep learning. Python includes a read-eval-print loop, functional aspects such as higher-order functions, and object-oriented programming that consists of metaclasses.

Search

Search occurs in many sort of problem solving, consisting of planning, restriction fulfillment, and playing video games such as checkers, chess, and go. The finest known AI-search tree search algorithms are breadth-first search, depth-first search, A *, and Monte Carlo Search. Key search algorithms for Boolean satisfiability are WalkSAT, conflict-driven provision learning, and the DPLL algorithm. For adversarial search when playing video games, alpha-beta pruning, branch and bound, and minimax were early contributions.

Knowledge representation and thinking

Multiple various techniques to represent understanding and then reason with those representations have actually been examined. Below is a quick summary of methods to knowledge representation and automated reasoning.

Knowledge representation

Semantic networks, conceptual charts, frames, and reasoning are all approaches to modeling understanding such as domain knowledge, analytical knowledge, and the semantic meaning of language. Ontologies model crucial principles and their relationships in a domain. Example ontologies are YAGO, WordNet, and DOLCE. DOLCE is an example of an upper ontology that can be used for any domain while WordNet is a lexical resource that can likewise be deemed an ontology. YAGO includes WordNet as part of its ontology, to align truths extracted from Wikipedia with WordNet synsets. The Disease Ontology is an example of a medical ontology presently being used.

Description logic is a logic for automated category of ontologies and for spotting irregular category information. OWL is a language utilized to represent ontologies with description reasoning. Protégé is an ontology editor that can check out in OWL ontologies and then examine consistency with deductive classifiers such as such as HermiT. [89]

First-order logic is more basic than description reasoning. The automated theorem provers gone over listed below can show theorems in first-order reasoning. Horn clause logic is more limited than first-order logic and is utilized in logic programs languages such as Prolog. Extensions to first-order reasoning consist of temporal logic, to manage time; epistemic logic, to reason about agent understanding; modal reasoning, to deal with possibility and need; and probabilistic logics to manage logic and probability together.

Automatic theorem proving

Examples of automated theorem provers for first-order reasoning are:

Prover9.

ACL2.

Vampire.

Prover9 can be used in combination with the Mace4 design checker. ACL2 is a theorem prover that can handle evidence by induction and is a descendant of the Boyer-Moore Theorem Prover, likewise understood as Nqthm.

Reasoning in knowledge-based systems

Knowledge-based systems have an explicit understanding base, usually of rules, to improve reusability across domains by separating procedural code and domain understanding. A separate reasoning engine procedures guidelines and includes, deletes, or customizes an understanding store.

Forward chaining inference engines are the most common, and are seen in CLIPS and OPS5. Backward chaining occurs in Prolog, where a more minimal logical representation is used, Horn Clauses. Pattern-matching, specifically unification, is utilized in Prolog.

A more versatile sort of analytical occurs when reasoning about what to do next occurs, rather than merely selecting one of the readily available actions. This sort of meta-level reasoning is used in Soar and in the BB1 chalkboard architecture.

Cognitive architectures such as ACT-R may have additional capabilities, such as the capability to assemble regularly utilized knowledge into higher-level portions.

Commonsense thinking

Marvin Minsky initially proposed frames as a method of analyzing typical visual scenarios, such as an office, and Roger Schank extended this concept to scripts for typical routines, such as dining out. Cyc has tried to capture beneficial common-sense understanding and has "micro-theories" to handle particular sort of domain-specific reasoning.

Qualitative simulation, such as Benjamin Kuipers's QSIM, [90] estimates human reasoning about naive physics, such as what takes place when we heat up a liquid in a pot on the stove. We expect it to heat and potentially boil over, even though we might not understand its temperature level, its boiling point, or other information, such as air pressure.

Similarly, Allen's temporal interval algebra is a simplification of thinking about time and Region Connection Calculus is a simplification of thinking about spatial relationships. Both can be fixed with restraint solvers.

Constraints and constraint-based thinking

Constraint solvers perform a more limited kind of reasoning than first-order reasoning. They can simplify sets of spatiotemporal constraints, such as those for RCC or Temporal Algebra, together with resolving other kinds of puzzle issues, such as Wordle, Sudoku, cryptarithmetic problems, and so on. Constraint logic programming can be used to solve scheduling issues, for example with constraint dealing with rules (CHR).

Automated preparation

The General Problem Solver (GPS) cast planning as analytical used means-ends analysis to develop strategies. STRIPS took a different method, seeing preparation as theorem proving. Graphplan takes a least-commitment approach to planning, instead of sequentially selecting actions from a preliminary state, working forwards, or an objective state if working backwards. Satplan is a technique to preparing where a preparation problem is decreased to a Boolean satisfiability problem.

Natural language processing

Natural language processing concentrates on dealing with language as information to perform jobs such as recognizing subjects without always understanding the intended significance. Natural language understanding, in contrast, constructs a meaning representation and uses that for additional processing, such as addressing questions.

Parsing, tokenizing, spelling correction, part-of-speech tagging, noun and verb phrase chunking are all elements of natural language processing long managed by symbolic AI, however given that improved by deep learning approaches. In symbolic AI, discourse representation theory and first-order logic have been used to represent sentence meanings. Latent semantic analysis (LSA) and specific semantic analysis also provided vector representations of files. In the latter case, vector components are interpretable as ideas called by Wikipedia posts.

New deep learning techniques based upon Transformer models have actually now eclipsed these earlier symbolic AI approaches and achieved state-of-the-art performance in natural language processing. However, Transformer models are opaque and do not yet produce human-interpretable semantic representations for sentences and files. Instead, they produce task-specific vectors where the significance of the vector parts is nontransparent.

Agents and multi-agent systems

Agents are autonomous systems embedded in an environment they view and act upon in some sense. Russell and Norvig's basic textbook on expert system is organized to show representative architectures of increasing elegance. [91] The sophistication of agents varies from basic reactive agents, to those with a model of the world and automated preparation abilities, perhaps a BDI representative, i.e., one with beliefs, desires, and objectives - or additionally a support learning design learned with time to select actions - approximately a mix of alternative architectures, such as a neuro-symbolic architecture [87] that consists of deep knowing for understanding. [92]

On the other hand, a multi-agent system consists of several agents that interact amongst themselves with some inter-agent interaction language such as Knowledge Query and Manipulation Language (KQML). The representatives need not all have the exact same internal architecture. Advantages of multi-agent systems consist of the capability to divide work amongst the agents and to increase fault tolerance when representatives are lost. Research issues consist of how agents reach agreement, distributed problem solving, multi-agent knowing, multi-agent preparation, and distributed restraint optimization.

Controversies emerged from at an early stage in symbolic AI, both within the field-e.g., between logicists (the pro-logic "neats") and non-logicists (the anti-logic "scruffies")- and between those who welcomed AI however turned down symbolic approaches-primarily connectionists-and those outside the field. Critiques from outside of the field were mainly from theorists, on intellectual grounds, however also from funding companies, especially throughout the 2 AI winters.

The Frame Problem: knowledge representation difficulties for first-order logic

Limitations were found in utilizing basic first-order logic to factor about vibrant domains. Problems were found both with concerns to mentioning the prerequisites for an action to be successful and in providing axioms for what did not alter after an action was carried out.

McCarthy and Hayes presented the Frame Problem in 1969 in the paper, "Some Philosophical Problems from the Standpoint of Artificial Intelligence." [93] An easy example occurs in "proving that one person could enter into conversation with another", as an axiom asserting "if a person has a telephone he still has it after searching for a number in the telephone directory" would be required for the reduction to prosper. Similar axioms would be needed for other domain actions to define what did not change.

A comparable issue, called the Qualification Problem, takes place in trying to mention the prerequisites for an action to be successful. A limitless number of pathological conditions can be envisioned, e.g., a banana in a tailpipe could prevent an automobile from running correctly.

McCarthy's technique to fix the frame issue was circumscription, a kind of non-monotonic logic where reductions could be made from actions that need just define what would alter while not having to clearly specify everything that would not alter. Other non-monotonic reasonings supplied truth upkeep systems that revised beliefs causing contradictions.

Other methods of managing more open-ended domains consisted of probabilistic reasoning systems and device learning to discover brand-new concepts and guidelines. McCarthy's Advice Taker can be considered as a motivation here, as it might incorporate brand-new knowledge supplied by a human in the kind of assertions or rules. For instance, experimental symbolic machine discovering systems explored the ability to take top-level natural language advice and to interpret it into domain-specific actionable rules.

Similar to the issues in managing dynamic domains, common-sense thinking is also difficult to record in formal reasoning. Examples of sensible thinking consist of implicit thinking about how people think or general understanding of daily events, objects, and living creatures. This type of understanding is taken for granted and not deemed noteworthy. Common-sense thinking is an open area of research and challenging both for symbolic systems (e.g., Cyc has attempted to record key parts of this knowledge over more than a years) and neural systems (e.g., self-driving cars that do not understand not to drive into cones or not to strike pedestrians strolling a bike).

McCarthy viewed his Advice Taker as having sensible, however his meaning of common-sense was various than the one above. [94] He defined a program as having good sense "if it immediately deduces for itself an adequately broad class of instant repercussions of anything it is told and what it already knows. "

Connectionist AI: philosophical difficulties and sociological disputes

Connectionist methods include earlier work on neural networks, [95] such as perceptrons; operate in the mid to late 80s, such as Danny Hillis's Connection Machine and Yann LeCun's advances in convolutional neural networks; to today's more innovative techniques, such as Transformers, GANs, and other work in deep learning.

Three philosophical positions [96] have actually been outlined amongst connectionists:

1. Implementationism-where connectionist architectures execute the abilities for symbolic processing,

2. Radical connectionism-where symbolic processing is turned down completely, and connectionist architectures underlie intelligence and are fully adequate to discuss it,

3. Moderate connectionism-where symbolic processing and connectionist architectures are deemed complementary and both are needed for intelligence

Olazaran, in his sociological history of the controversies within the neural network community, explained the moderate connectionism view as essentially compatible with present research study in neuro-symbolic hybrids:

The 3rd and last position I wish to take a look at here is what I call the moderate connectionist view, a more diverse view of the current debate between connectionism and symbolic AI. Among the scientists who has elaborated this position most explicitly is Andy Clark, a thinker from the School of Cognitive and Computing Sciences of the University of Sussex (Brighton, England). Clark protected hybrid (partly symbolic, partly connectionist) systems. He declared that (a minimum of) 2 type of theories are required in order to study and model cognition. On the one hand, for some information-processing tasks (such as pattern recognition) connectionism has benefits over symbolic designs. But on the other hand, for other cognitive processes (such as serial, deductive reasoning, and generative sign control processes) the symbolic paradigm uses appropriate models, and not only "approximations" (contrary to what extreme connectionists would claim). [97]

Gary Marcus has actually declared that the animus in the deep learning neighborhood against symbolic approaches now may be more sociological than philosophical:

To believe that we can simply abandon symbol-manipulation is to suspend shock.

And yet, for the many part, that's how most current AI proceeds. Hinton and many others have attempted difficult to banish signs altogether. The deep learning hope-seemingly grounded not a lot in science, but in a sort of historic grudge-is that intelligent habits will emerge purely from the confluence of huge data and deep knowing. Where classical computer systems and software application resolve jobs by defining sets of symbol-manipulating rules devoted to specific tasks, such as modifying a line in a word processor or carrying out a computation in a spreadsheet, neural networks usually attempt to fix jobs by statistical approximation and finding out from examples.

According to Marcus, Geoffrey Hinton and his colleagues have actually been vehemently "anti-symbolic":

When deep knowing reemerged in 2012, it was with a type of take-no-prisoners mindset that has identified the majority of the last years. By 2015, his hostility toward all things signs had fully crystallized. He provided a talk at an AI workshop at Stanford comparing symbols to aether, one of science's biggest errors.

...

Ever since, his anti-symbolic campaign has only increased in strength. In 2016, Yann LeCun, Bengio, and Hinton composed a manifesto for deep learning in among science's most essential journals, Nature. It closed with a direct attack on symbol manipulation, calling not for reconciliation but for straight-out replacement. Later, Hinton told a gathering of European Union leaders that investing any additional cash in symbol-manipulating techniques was "a huge error," likening it to investing in internal combustion engines in the period of electric cars. [98]

Part of these conflicts might be because of uncertain terms:

Turing award winner Judea Pearl provides a critique of device knowing which, regrettably, conflates the terms maker learning and deep learning. Similarly, when Geoffrey Hinton describes symbolic AI, the connotation of the term tends to be that of specialist systems dispossessed of any capability to learn. The usage of the terms needs information. Machine learning is not confined to association guideline mining, c.f. the body of work on symbolic ML and relational learning (the differences to deep learning being the option of representation, localist logical rather than distributed, and the non-use of gradient-based knowing algorithms). Equally, symbolic AI is not practically production guidelines composed by hand. A proper definition of AI concerns knowledge representation and reasoning, autonomous multi-agent systems, preparation and argumentation, along with learning. [99]

Situated robotics: the world as a design

Another review of symbolic AI is the embodied cognition method:

The embodied cognition method claims that it makes no sense to think about the brain separately: cognition occurs within a body, which is embedded in an environment. We require to study the system as a whole; the brain's operating exploits consistencies in its environment, consisting of the rest of its body. Under the embodied cognition method, robotics, vision, and other sensors become central, not peripheral. [100]

Rodney Brooks developed behavior-based robotics, one method to embodied cognition. Nouvelle AI, another name for this method, is considered as an alternative to both symbolic AI and connectionist AI. His approach declined representations, either symbolic or distributed, as not just unneeded, however as harmful. Instead, he created the subsumption architecture, a layered architecture for embodied agents. Each layer accomplishes a various function and must operate in the real life. For example, the first robot he explains in Intelligence Without Representation, has three layers. The bottom layer interprets sonar sensing units to avoid things. The middle layer triggers the robotic to wander around when there are no barriers. The top layer causes the robot to go to more far-off places for further exploration. Each layer can momentarily prevent or suppress a lower-level layer. He criticized AI scientists for defining AI issues for their systems, when: "There is no tidy division between understanding (abstraction) and reasoning in the real world." [101] He called his robots "Creatures" and each layer was "composed of a fixed-topology network of simple finite state devices." [102] In the Nouvelle AI approach, "First, it is vitally important to test the Creatures we develop in the real life; i.e., in the very same world that we humans inhabit. It is dreadful to fall into the temptation of testing them in a simplified world first, even with the finest objectives of later moving activity to an unsimplified world." [103] His emphasis on real-world testing remained in contrast to "Early operate in AI focused on games, geometrical issues, symbolic algebra, theorem proving, and other formal systems" [104] and the usage of the blocks world in symbolic AI systems such as SHRDLU.

Current views

Each approach-symbolic, connectionist, and behavior-based-has benefits, however has been slammed by the other methods. Symbolic AI has been criticized as disembodied, responsible to the credentials problem, and poor in dealing with the affective problems where deep learning excels. In turn, connectionist AI has actually been criticized as poorly suited for deliberative detailed issue resolving, including knowledge, and dealing with planning. Finally, Nouvelle AI excels in reactive and real-world robotics domains however has actually been slammed for problems in including knowing and understanding.

Hybrid AIs integrating several of these methods are presently deemed the course forward. [19] [81] [82] Russell and Norvig conclude that:

Overall, Dreyfus saw areas where AI did not have total responses and stated that Al is therefore impossible; we now see a number of these exact same areas undergoing continued research and development leading to increased capability, not impossibility. [100]

Artificial intelligence.

Automated planning and scheduling

Automated theorem proving

Belief revision

Case-based reasoning

Cognitive architecture

Cognitive science

Connectionism

Constraint shows

Deep learning

First-order logic

GOFAI

History of expert system

Inductive reasoning programming

Knowledge-based systems

Knowledge representation and thinking

Logic programming

Machine knowing

Model monitoring

Model-based reasoning

Multi-agent system

Natural language processing

Neuro-symbolic AI

Ontology

Philosophy of expert system

Physical symbol systems hypothesis

Semantic Web

Sequential pattern mining

Statistical relational learning

Symbolic mathematics

YAGO ontology

WordNet

Notes

^ McCarthy once stated: "This is AI, so we don't care if it's mentally real". [4] McCarthy restated his position in 2006 at the AI@50 conference where he said "Expert system is not, by meaning, simulation of human intelligence". [28] Pamela McCorduck composes that there are "2 major branches of synthetic intelligence: one targeted at producing smart behavior regardless of how it was accomplished, and the other focused on modeling smart processes discovered in nature, especially human ones.", [29] Stuart Russell and Peter Norvig composed "Aeronautical engineering texts do not define the objective of their field as making 'devices that fly so exactly like pigeons that they can deceive even other pigeons.'" [30] Citations

^ Garnelo, Marta; Shanahan, Murray (October 2019). "Reconciling deep knowing with symbolic expert system: representing objects and relations". Current Opinion in Behavioral Sciences. 29: 17-23. doi:10.1016/ j.cobeha.2018.12.010. hdl:10044/ 1/67796.

^ Thomason, Richmond (February 27, 2024). "Logic-Based Expert System". In Zalta, Edward N. (ed.). Stanford Encyclopedia of Philosophy.

^ Garnelo, Marta; Shanahan, Murray (2019-10-01). "Reconciling deep learning with symbolic expert system: representing objects and relations". Current Opinion in Behavioral Sciences. 29: 17-23. doi:10.1016/ j.cobeha.2018.12.010. hdl:10044/ 1/67796. S2CID 72336067.

^ a b Kolata 1982.

^ Kautz 2022, pp. 107-109.

^ a b Russell & Norvig 2021, p. 19.

^ a b Russell & Norvig 2021, pp. 22-23.

^ a b Kautz 2022, pp. 109-110.

^ a b c Kautz 2022, p. 110.

^ Kautz 2022, pp. 110-111.

^ a b Russell & Norvig 2021, p. 25.

^ Kautz 2022, p. 111.

^ Kautz 2020, pp. 110-111.

^ Rumelhart, David E.; Hinton, Geoffrey E.; Williams, Ronald J. (1986 ). "Learning representations by back-propagating mistakes". Nature. 323 (6088 ): 533-536. Bibcode:1986 Natur.323..533 R. doi:10.1038/ 323533a0. ISSN 1476-4687. S2CID 205001834.

^ LeCun, Y.; Boser, B.; Denker, I.; Henderson, D.; Howard, R.; Hubbard, W.; Tackel, L. (1989 ). "Backpropagation Applied to Handwritten Postal Code Recognition". Neural Computation. 1 (4 ): 541-551. doi:10.1162/ neco.1989.1.4.541. S2CID 41312633.

^ a b Marcus & Davis 2019.

^ a b Rossi, Francesca. "Thinking Fast and Slow in AI". AAAI. Retrieved 5 July 2022.

^ a b Selman, Bart. "AAAI Presidential Address: The State of AI". AAAI. Retrieved 5 July 2022.

^ a b c Kautz 2020.

^ Kautz 2022, p. 106.

^ Newell & Simon 1972.

^ & McCorduck 2004, pp. 139-179, 245-250, 322-323 (EPAM).

^ Crevier 1993, pp. 145-149.

^ McCorduck 2004, pp. 450-451.

^ Crevier 1993, pp. 258-263.

^ a b Kautz 2022, p. 108.

^ Russell & Norvig 2021, p. 9 (logicist AI), p. 19 (McCarthy's work).

^ Maker 2006.

^ McCorduck 2004, pp. 100-101.

^ Russell & Norvig 2021, p. 2.

^ McCorduck 2004, pp. 251-259.

^ Crevier 1993, pp. 193-196.

^ Howe 1994.

^ McCorduck 2004, pp. 259-305.

^ Crevier 1993, pp. 83-102, 163-176.

^ McCorduck 2004, pp. 421-424, 486-489.

^ Crevier 1993, p. 168.

^ McCorduck 2004, p. 489.

^ Crevier 1993, pp. 239-243.

^ Russell & Norvig 2021, p. 316, 340.

^ Kautz 2022, p. 109.

^ Russell & Norvig 2021, p. 22.

^ McCorduck 2004, pp. 266-276, 298-300, 314, 421.

^ Shustek, Len (June 2010). "An interview with Ed Feigenbaum". Communications of the ACM. 53 (6 ): 41-45. doi:10.1145/ 1743546.1743564. ISSN 0001-0782. S2CID 10239007. Retrieved 2022-07-14.

^ Lenat, Douglas B; Feigenbaum, Edward A (1988 ). "On the thresholds of understanding". Proceedings of the International Workshop on Expert System for Industrial Applications: 291-300. doi:10.1109/ AIIA.1988.13308. S2CID 11778085.

^ Russell & Norvig 2021, pp. 22-24.

^ McCorduck 2004, pp. 327-335, 434-435.

^ Crevier 1993, pp. 145-62, 197-203.

^ a b Russell & Norvig 2021, p. 23.

^ a b Clancey 1987.

^ a b Shustek, Len (2010 ). "An interview with Ed Feigenbaum". Communications of the ACM. 53 (6 ): 41-45. doi:10.1145/ 1743546.1743564. ISSN 0001-0782. S2CID 10239007. Retrieved 2022-08-05.

^ "The fascination with AI: what is artificial intelligence?". IONOS Digitalguide. Retrieved 2021-12-02.

^ Hayes-Roth, Murray & Adelman 2015.

^ Hayes-Roth, Barbara (1985 ). "A chalkboard architecture for control". Artificial Intelligence. 26 (3 ): 251-321. doi:10.1016/ 0004-3702( 85 )90063-3.

^ Hayes-Roth, Barbara (1980 ). Human Planning Processes. RAND.

^ Pearl 1988.

^ Spiegelhalter et al. 1993.

^ Russell & Norvig 2021, pp. 335-337.

^ Russell & Norvig 2021, p. 459.

^ Quinlan, J. Ross. "Chapter 15: Learning Efficient Classification Procedures and their Application to Chess End Games". In Michalski, Carbonell & Mitchell (1983 ).

^ Quinlan, J. Ross (1992-10-15). C4.5: Programs for Machine Learning (1st ed.). San Mateo, Calif: Morgan Kaufmann. ISBN 978-1-55860-238-0.

^ Mitchell, Tom M.; Utgoff, Paul E.; Banerji, Ranan. "Chapter 6: Learning by Experimentation: Acquiring and Refining Problem-Solving Heuristics". In Michalski, Carbonell & Mitchell (1983 ).

^ Valiant, L. G. (1984-11-05). "A theory of the learnable". Communications of the ACM. 27 (11 ): 1134-1142. doi:10.1145/ 1968.1972. ISSN 0001-0782. S2CID 12837541.

^ Koedinger, K. R.; Anderson, J. R.; Hadley, W. H.; Mark, M. A.; others (1997 ). "Intelligent tutoring goes to school in the big city". International Journal of Artificial Intelligence in Education (IJAIED). 8: 30-43. Retrieved 2012-08-18.

^ Shapiro, Ehud Y (1981 ). "The Model Inference System". Proceedings of the 7th global joint conference on Expert system. IJCAI. Vol. 2. p. 1064.

^ Manna, Zohar; Waldinger, Richard (1980-01-01). "A Deductive Approach to Program Synthesis". ACM Trans. Program. Lang. Syst. 2 (1 ): 90-121. doi:10.1145/ 357084.357090. S2CID 14770735.

^ Schank, Roger C. (1983-01-28). Dynamic Memory: A Theory of Reminding and Learning in Computers and People. Cambridge Cambridgeshire: New York: Cambridge University Press. ISBN 978-0-521-27029-8.

^ Hammond, Kristian J. (1989-04-11). Case-Based Planning: Viewing Planning as a Memory Task. Boston: Academic Press. ISBN 978-0-12-322060-8.

^ Koza, John R. (1992-12-11). Genetic Programming: On the Programming of Computers by Means of Natural Selection (1st ed.). Cambridge, Mass: A Bradford Book. ISBN 978-0-262-11170-6.

^ Mostow, David Jack. "Chapter 12: Machine Transformation of Advice into a Heuristic Search Procedure". In Michalski, Carbonell & Mitchell (1983 ).

^ Bareiss, Ray; Porter, Bruce; Wier, Craig. "Chapter 4: Protos: An Exemplar-Based Learning Apprentice". In Michalski, Carbonell & Mitchell (1986 ), pp. 112-139.

^ Carbonell, Jaime. "Chapter 5: Learning by Analogy: Formulating and Generalizing Plans from Past Experience". In Michalski, Carbonell & Mitchell (1983 ), pp. 137-162.

^ Carbonell, Jaime. "Chapter 14: Derivational Analogy: A Theory of Reconstructive Problem Solving and Expertise Acquisition". In Michalski, Carbonell & Mitchell (1986 ), pp. 371-392.

^ Mitchell, Tom; Mabadevan, Sridbar; Steinberg, Louis. "Chapter 10: LEAP: A Learning Apprentice for VLSI Design". In Kodratoff & Michalski (1990 ), pp. 271-289.

^ Lenat, Douglas. "Chapter 9: The Role of Heuristics in Learning by Discovery: Three Case Studies". In Michalski, Carbonell & Mitchell (1983 ), pp. 243-306.

^ Korf, Richard E. (1985 ). Learning to Solve Problems by Searching for Macro-Operators. Research Notes in Expert System. Pitman Publishing. ISBN 0-273-08690-1.

^ Valiant 2008.

^ a b Garcez et al. 2015.

^ Marcus 2020, p. 44.

^ Marcus 2020, p. 17.

^ a b Rossi 2022.

^ a b Selman 2022.

^ Garcez & Lamb 2020, p. 2.

^ Garcez et al. 2002.

^ Rocktäschel, Tim; Riedel, Sebastian (2016 ). "Learning Knowledge Base Inference with Neural Theorem Provers". Proceedings of the fifth Workshop on Automated Knowledge Base Construction. San Diego, CA: Association for Computational Linguistics. pp. 45-50. doi:10.18653/ v1/W16 -1309. Retrieved 2022-08-06.

^ Serafini, Luciano; Garcez, Artur d'Avila (2016 ), Logic Tensor Networks: Deep Learning and Logical Reasoning from Data and Knowledge, arXiv:1606.04422.

^ a b Garcez, Artur d'Avila; Lamb, Luis C.; Gabbay, Dov M. (2009 ). Neural-Symbolic Cognitive Reasoning (1st ed.). Berlin-Heidelberg: Springer. Bibcode:2009 nscr.book ... D. doi:10.1007/ 978-3-540-73246-4. ISBN 978-3-540-73245-7. S2CID 14002173.

^ Kiczales, Gregor; Rivieres, Jim des; Bobrow, Daniel G. (1991-07-30). The Art of the Metaobject Protocol (1st ed.). Cambridge, Mass: The MIT Press. ISBN 978-0-262-61074-2.

^ Motik, Boris; Shearer, Rob; Horrocks, Ian (2009-10-28). "Hypertableau Reasoning for Description Logics". Journal of Expert System Research. 36: 165-228. arXiv:1401.3485. doi:10.1613/ jair.2811. ISSN 1076-9757. S2CID 190609.

^ Kuipers, Benjamin (1994 ). Qualitative Reasoning: Modeling and Simulation with Incomplete Knowledge. MIT Press. ISBN 978-0-262-51540-5.

^ Russell & Norvig 2021.

^ Leo de Penning, Artur S. d'Avila Garcez, Luís C. Lamb, John-Jules Ch. Meyer: "A Neural-Symbolic Cognitive Agent for Online Learning and Reasoning." IJCAI 2011: 1653-1658.

^ McCarthy & Hayes 1969.

^ McCarthy 1959.

^ Nilsson 1998, p. 7.

^ Olazaran 1993, pp. 411-416.

^ Olazaran 1993, pp. 415-416.

^ Marcus 2020, p. 20.

^ Garcez & Lamb 2020, p. 8.

^ a b Russell & Norvig 2021, p. 982.

^ Brooks 1991, p. 143.

^ Brooks 1991, p. 151.

^ Brooks 1991, p. 150.

^ Brooks 1991, p. 142.

References

Brooks, Rodney A. (1991 ). "Intelligence without representation". Artificial Intelligence. 47 (1 ): 139-159. doi:10.1016/ 0004-3702( 91 )90053-M. ISSN 0004-3702. S2CID 207507849. Retrieved 2022-09-13.

Clancey, William (1987 ). Knowledge-Based Tutoring: The GUIDON Program (MIT Press Series in Expert System) (Hardcover ed.).

Crevier, Daniel (1993 ). AI: The Tumultuous Search for Artificial Intelligence. New York City, NY: BasicBooks. ISBN 0-465-02997-3.

Dreyfus, Hubert L (1981 ). "From micro-worlds to understanding representation: AI at an impasse" (PDF). Mind Design. MIT Press, Cambridge, MA: 161-204.

Garcez, Artur S. d'Avila; Broda, Krysia; Gabbay, Dov M.; Gabbay, Augustus de Morgan Professor of Logic Dov M. (2002 ). Neural-Symbolic Learning Systems: Foundations and Applications. Springer Science & Business Media. ISBN 978-1-85233-512-0.

Garcez, Artur; Besold, Tarek; De Raedt, Luc; Földiák, Peter; Hitzler, Pascal; Icard, Thomas; Kühnberger, Kai-Uwe; Lamb, Luís; Miikkulainen, Risto; Silver, Daniel (2015 ). Neural-Symbolic Learning and Reasoning: Contributions and Challenges. AAI Spring Symposium - Knowledge Representation and Reasoning: Integrating Symbolic and Neural Approaches. Stanford, CA: AAAI Press. doi:10.13140/ 2.1.1779.4243.

Garcez, Artur d'Avila; Gori, Marco; Lamb, Luis C.; Serafini, Luciano; Spranger, Michael; Tran, Son N. (2019 ), Neural-Symbolic Computing: An Efficient Methodology for Principled Integration of Artificial Intelligence and Reasoning, arXiv:1905.06088.

Garcez, Artur d'Avila; Lamb, Luis C. (2020 ), Neurosymbolic AI: The 3rd Wave, arXiv:2012.05876.

Haugeland, John (1985 ), Expert System: The Very Idea, Cambridge, Mass: MIT Press, ISBN 0-262-08153-9.

Hayes-Roth, Frederick; Murray, William; Adelman, Leonard (2015 ). "Expert systems". AccessScience. doi:10.1036/ 1097-8542.248550.

Honavar, Vasant; Uhr, Leonard (1994 ). Symbolic Expert System, Connectionist Networks & Beyond (Technical report). Iowa State University Digital Repository, Computer Science Technical Reports. 76. p. 6.

Honavar, Vasant (1995 ). Symbolic Artificial Intelligence and Numeric Artificial Neural Networks: Towards a Resolution of the Dichotomy. The Springer International Series In Engineering and Computer Technology. Springer US. pp. 351-388. doi:10.1007/ 978-0-585-29599-2_11.

Howe, J. (November 1994). "Artificial Intelligence at Edinburgh University: a Point of view". Archived from the original on 15 May 2007. Retrieved 30 August 2007.

Kautz, Henry (2020-02-11). The Third AI Summer, Henry Kautz, AAAI 2020 Robert S. Engelmore Memorial Award Lecture. Retrieved 2022-07-06.

Kautz, Henry (2022 ). "The Third AI Summer: AAAI Robert S. Engelmore Memorial Lecture". AI Magazine. 43 (1 ): 93-104. doi:10.1609/ aimag.v43i1.19122. ISSN 2371-9621. S2CID 248213051. Retrieved 2022-07-12.

Kodratoff, Yves; Michalski, Ryszard, eds. (1990 ). Artificial intelligence: an Artificial Intelligence Approach. Vol. III. San Mateo, Calif.: Morgan Kaufman. ISBN 0-934613-09-5. OCLC 893488404.

Kolata, G. (1982 ). "How can computers get sound judgment?". Science. 217 (4566 ): 1237-1238. Bibcode:1982 Sci ... 217.1237 K. doi:10.1126/ science.217.4566.1237. PMID 17837639.

Maker, Meg Houston (2006 ). "AI@50: AI Past, Present, Future". Dartmouth College. Archived from the original on 3 January 2007. Retrieved 16 October 2008.

Marcus, Gary; Davis, Ernest (2019 ). Rebooting AI: Building Expert System We Can Trust. New York: Pantheon Books. ISBN 9781524748258. OCLC 1083223029.

Marcus, Gary (2020 ), The Next Decade in AI: Four Steps Towards Robust Artificial Intelligence, arXiv:2002.06177.

McCarthy, John (1959 ). PROGRAMS WITH SOUND JUDGMENT. Symposium on Mechanization of Thought Processes. NATIONAL PHYSICAL LABORATORY, TEDDINGTON, UK. p. 8.

McCarthy, John; Hayes, Patrick (1969 ). "Some Philosophical Problems From the Standpoint of Artificial Intelligence". Machine Intelligence 4. B. Meltzer, Donald Michie (eds.): 463-502.

McCorduck, Pamela (2004 ), Machines Who Think (second ed.), Natick, Massachusetts: A. K. Peters, ISBN 1-5688-1205-1.